Summary:

The ranking disparities among various agencies and publications raise doubt about validity and could lead to confusion for patients and their families.

The lone hospital in Haleyville, Alabama, had boasted since spring of an award for clean rooms, but in July 2016, it won bigger bragging rights. Lakeland Community, a 59-bed facility in a city of about 4,000 people, received the federal government’s new five-star quality rating.

This meant it landed the same overall grade as the Mayo Clinic in Rochester, Minnesota.

Such high honors eluded thousands of medical centers from coast to coast, including those lauded for years by established ratings programs. Small hospitals were suddenly king as the Centers for Medicare & Medicaid Services rolled out a tool to show consumers which providers did the best job delivering routine services.

But some were puzzled about the institutions that did not get top billing, or worse, were rated below average. Adding to the confusion, when U.S. News & World Report released its honor roll a week later, only one of CMS’ 102 five-star hospitals made the list. That was Mayo.

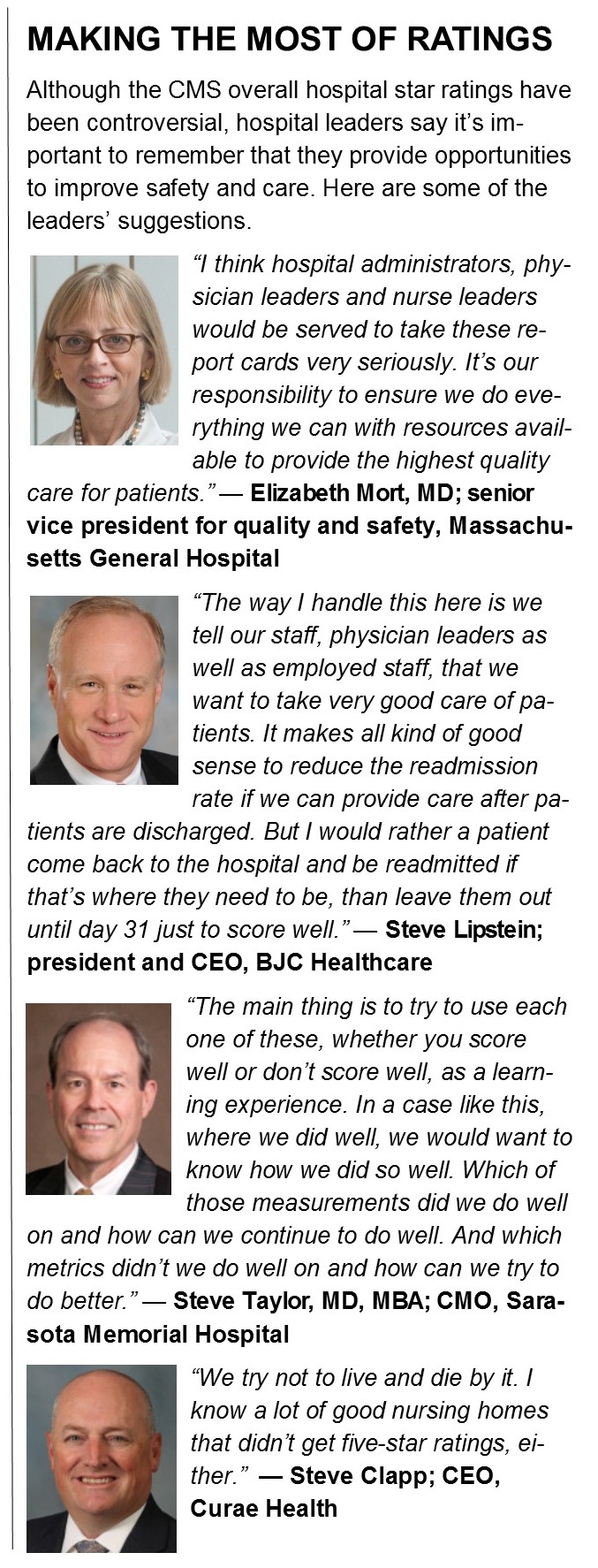

Massachusetts General, the Harvard teaching hospital that U.S. News had named No. 1 in the nation in 2015, received four stars, one fewer than a nearby orthopedic center. “We weren’t particularly disappointed; nor were we particularly delighted,” said Elizabeth Mort, MD, senior vice president for quality and safety.

The two-star, below-average tier included Barnes-Jewish Hospital in St. Louis, Missouri, another honor roll member. “We already knew the methodology, quite frankly, lacked credibility,” BJC Healthcare president and chief executive officer Steve Lipstein said of the federal rating.

In the months that followed, both health care systems displayed the media ratings prominently on their home pages, leaving government ratings to the government.

Elsewhere, too, leaders of large safety net and teaching hospitals thickened their skin to the now-quarterly federal stars, unleashed in a second wave in October. They say their institutions are at a disadvantage, because CMS rated small hospitals on fewer metrics and did not adjust results to offset the at-risk populations that safety net hospitals serve.

Not everyone agrees with the criticism. Leah Binder, president and CEO of The Leapfrog Group, publicly supported the release of the star ratings, noting in a written statement that avoidable accidents and errors in U.S. hospitals kill more than 500 people a day.

“Health care data should never again be locked away in an ivory tower accessible only by providers or policymakers,” said Binder, whose company measures hospital safety. “Which hospital you choose can mean the difference between a quick recovery or a dangerous hospital-acquired infection; between feeling respected and cared for, or ignored and distrusted.”

Yet questions about the methodology left some physician leaders struggling with how to sell a report card to staff, when they did not respect it themselves. They knew the underlying data provided opportunities to improve, but the star rating was something else entirely.

“People, at their core, understand measurement and goals,” said Janis Orlowski, MD, chief health care officer for the Association of American Medical Colleges, which pushed unsuccessfully for changes before the ratings went public. “But when the measurement is not fair, they rail against it.”

SMALL AND NIMBLE

There was no railing in Haleyville. Word got out through a safety huddle and excitement spread through the staff. Five stars.

Lakeland Community makes its home in the birthplace of America’s first 911 call, a 1968 event celebrated each year with a festival. In lockstep, the hospital’s emergency department posts response times that beat counterparts nationally. Patients wait five minutes, not 20, to see a health care professional, CMS records show. The ones with broken bones get pain drugs in 18 minutes, not 53. Typically, patients are in and out in 66 minutes, not 115.

The city, once a hotbed for mobile home manufacturing, now has an aging population and a struggling economy, with a mean household income of about $25,000. Hospital CEO Debbie Pace said residents appreciate Lakeland Community, which Curae Health bought from LifePoint Health in 2015. Consumers rated the hospital above average in the national Hospital Consumer Assessment of Healthcare Providers and Systems survey, which counts for 22 percent of the overall CMS grade.

Pace described a nimble work environment, one where process can change in a day or two. She recalls when everyone pitched in, within 24 to 48 hours, to give respiratory therapists a spare supply closet on the third floor to improve response times. “Our role is to understand any barriers and remove them,” she said.

Still, the five-star CMS rating was at odds with how Lakeland Community had been characterized by other report cards.

U.S. News had given it a below-average score in treatment of heart failure (the 2017 score was average). It was unrated by Leapfrog and had no awards from Healthgrades. The hospital did better with _Consumer Reports_, which gave it a safety score of 75 (some top hospitals scored 81) and singled it out for avoiding infections and readmissions.

As was the case with many small or specialty hospitals, Lakeland Community’s CMS star rating was derived from data that in some respects was incomplete. The hospital doesn’t do total hip and knee replacements, so the question about complications didn’t apply; nor did the one about readmission after coronary artery bypass graft surgery. Under CMS rules, low-volume, limited-service hospitals such as Lakeland Community could be rated side-by-side with high-volume facilities offering more complex care, even if many measures were marked “not available.”

In the first round of ratings, 102 hospitals had five stars, 934 had four stars, 1,770 had three stars, 723 had two stars and 133 had one star. More than 900 could not be evaluated because of insufficient data.
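To put those counts in proportion, the arithmetic can be sketched in a few lines of Python. The figures are the ones reported above; the variable and function names are illustrative only and do not come from CMS.

```python
# Star counts as reported above for the first round of ratings.
star_counts = {5: 102, 4: 934, 3: 1770, 2: 723, 1: 133}

# Hospitals that received any star rating at all.
total_rated = sum(star_counts.values())


def share(stars):
    """Percentage of rated hospitals at a given star level, to one decimal."""
    return round(100 * star_counts[stars] / total_rated, 1)


shares = {stars: share(stars) for stars in star_counts}

# One- and two-star hospitals together form the below-average tier.
below_average = star_counts[1] + star_counts[2]

print(total_rated)    # 3662
print(shares[5])      # 2.8
print(below_average)  # 856
```

By this count, fewer than 3 percent of rated hospitals earned five stars, while nearly a quarter landed below average.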

The agency wanted to include as many hospitals as possible, Kate Goodrich, MD, MHS, of CMS told stakeholders during an August 2015 national provider call, according to a transcript. No two hospitals are the same, she said, but the rating system would attempt to make the most of the tremendous amount of data on the agency’s existing Hospital Compare website, to help consumers better interpret quality measures. As the underlying measures evolved, so would the ratings.

A hospital could be judged by anywhere from nine to 64 measures. That left busy, complex institutions with greater exposure.

“What we saw was the more metrics you reported on, the lower your score,” said Orlowski, the medical colleges’ representative.

Steve Clapp, CEO for Lakeland Community’s parent company, has worked at a large tertiary care hospital and knows the challenges. But that doesn’t mean small hospitals have it easy. “The flip side,” he said, “is if you’ve only got 10 or 15 measurable events and you miss one of the 10, you’ve got a 10 percent error rate.”

SAFETY NET UNCERTAINTY

When the ratings debuted to the public in July, hospitals balked at their bedfellows. A Boston Globe writer called the industry “as indignant as a poorly rated chef.” It was as if the lines had blurred between cafeterias, culinary schools and gourmet dining rooms, putting an egg salad sandwich on equal footing with a souffle.

Scores were calculated for seven groups of measures: mortality, safety of care, readmissions, patient experience, effectiveness of care, timeliness of care and efficient use of medical imaging. A hospital had to be represented in at least three groups to participate, with at least one group measuring outcome (mortality, safety of care, readmissions). The weighted computations prioritized outcome over process — meaning, while timeliness counted for 4 percent of the rating, the readmission rate was worth 22 percent.
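The eligibility rule and the weighting can be illustrated with a rough Python sketch. It is not the CMS methodology. Only the 22 percent weights for readmissions and patient experience and the 4 percent weight for timeliness come from this article. The other four weights are assumptions chosen so the total reaches 100 percent. Spreading the weight of missing groups across the available ones is also an assumption, and the function names and the 0-to-1 score scale are hypothetical.

```python
# Groups the article identifies as measuring outcome.
OUTCOME_GROUPS = {"mortality", "safety_of_care", "readmissions"}

# Weights per group. Readmissions, patient experience and timeliness are
# from the article; the remaining four are ASSUMED so the total is 1.0.
WEIGHTS = {
    "mortality": 0.22,              # assumed
    "safety_of_care": 0.22,         # assumed
    "readmissions": 0.22,           # stated in the article
    "patient_experience": 0.22,     # stated in the article
    "effectiveness_of_care": 0.04,  # assumed
    "timeliness_of_care": 0.04,     # stated in the article
    "efficient_imaging": 0.04,      # assumed
}


def is_eligible(group_scores):
    """At least three scored groups, at least one of them an outcome group."""
    available = {g for g, s in group_scores.items() if s is not None}
    return len(available) >= 3 and bool(available & OUTCOME_GROUPS)


def summary_score(group_scores):
    """Weighted average over available groups, or None if not eligible.

    ASSUMPTION: weights of missing groups are redistributed proportionally.
    """
    if not is_eligible(group_scores):
        return None
    available = {g: s for g, s in group_scores.items() if s is not None}
    total_weight = sum(WEIGHTS[g] for g in available)
    return sum(WEIGHTS[g] * s for g, s in available.items()) / total_weight


# A hypothetical small hospital scored on only three groups.
small = {"readmissions": 1.0, "patient_experience": 0.0, "timeliness_of_care": 0.0}
print(is_eligible(small))
print(summary_score(small))
```

In this sketch, a hospital scored on only a few groups has its result driven largely by whichever heavily weighted groups it happens to report, which is the dynamic critics describe.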

Hospitals were already paying a price for readmission rates; in some cases, fines.

Critics suggest that the ratings, and a growing emphasis on pay for performance, could discourage hospitals from treating the poor.

“The way you could have a better score,” Orlowski explained, “is by not serving individuals who are poor, who have a low socioeconomic status, who are more complex. You don’t want to have a system that rewards people for driving away those individuals. And that’s the fundamental problem with this system.”

Lipstein of BJC Healthcare concluded that a hospital’s patient base, often dictated by geography and economics, effectively determines its rating.

He sees hospitals in affluent communities scoring high while those across town struggle. He draws a contrast between patients discharged into economically stable home environments and those who return to decaying neighborhoods without pharmacies, grocery stores or the means to pay for help. The latter group, he knows, is more likely to call 911 and return.

“For somebody like me who has a system of 15 hospitals, I don’t believe the hospitals that got four stars are four-star hospitals more than I believe the ones that got two stars are two-star hospitals, from a quality of care perspective,” he said. “Without appropriate risk adjustment, you end up in a situation where you don’t have an equitable outcome.”

CMS tells stakeholders that it continues to monitor the impact of socioeconomic status on results, and it is working with the National Quality Forum on a two-year trial to test the inclusion of such factors.

Lipstein served on an NQF panel that recommended risk adjustment. He faults the government for failing to adequately heed the recommendations. Medicaid eligibility, he said, could be viewed in the same light as comorbidities such as diabetes and congestive heart failure.

He likens punishing hospitals in these scenarios to punishing teachers without considering the challenges faced when serving disadvantaged students.

“The other side of the argument,” Lipstein said, “is if you risk adjust for sociodemographic status, you will be accepting a lower standard of care or a poorer health outcome for people of that sociodemographic class than otherwise.”

His response: Acknowledge the differences, as in schools, but send more resources, not fewer.

A TEXAS PUZZLE

The geographical distribution of the five-star ratings was a curiosity from the beginning.

New York produced one. Florida initially produced two.

But Texas? Thirteen.

Those three states have nearly the same number of staffed beds (55,000 to 60,000) and similar numbers of patient days, according to the American Hospital Directory. But Texas beds are spread out over more hospital sites — 380 — compared with 197 in New York and 215 in Florida. (California has more beds and patient days but fewer hospitals than Texas and had nine five-star ratings.)

“I think that Texas has more ‘smaller hospitals’ because of its rural nature and thus fits into the category of ‘small hospitals report less metrics and have a higher chance,’ at five stars,” Orlowski said.

Some of the Texas winners were specialty hospitals, including the 18-bed Texas Spine & Joint Hospital in Tyler. Houston’s 1,220-bed Memorial Hermann Hospital System made the five-star list, along with several midsized hospitals around the state. Memorial Hermann-Texas Medical Center serves as a teaching hospital for the University of Texas Medical School.

A July 2016 CMS analysis found that hospitals of all sizes are capable of performing well in the star ratings. It produced examples of teaching hospitals and safety net hospitals that had achieved five stars. But teaching hospitals were twice as likely as nonteaching hospitals to score below average (one or two stars). And only 14 percent of safety net hospitals landed in the two highest tiers, compared with 26 percent of their counterparts.

“The federal government can identify a few safety net hospitals in the five-star category,” Lipstein said. “They’re not statistically significant. Sometimes the risk adjustment factors the federal government does use will overcompensate for socio-demographics. It’s analogous to a blind squirrel finding an acorn.”

Sarasota Memorial Hospital, south of Tampa on the west coast of Florida, was one such acorn. The area is known for beach resorts, retirement communities, golf, dining and shopping, but over time it has seen some of the state’s wealthiest and poorest census tracts.

“We take all comers,” said Steve Taylor, MD, MBA, chief of medical operations. “Some of our patients unfortunately wait until they are critically ill to come to the emergency department. It’s challenging.”

The public hospital had 28,042 inpatient admissions last year and performed 19,918 surgeries.

The hospital board has authority to levy taxes and expects to collect $50 million in property tax revenues in 2017. Last year, it reported $13.5 million in traditional charity care, $7.6 million in indigent care fund payments and about $33 million in Medicare and Medicaid losses.

Sarasota Memorial was one of two hospitals in the state (the other was a Mayo Clinic in Jacksonville) to get a five-star CMS rating in July 2016. In U.S. News’ reports in 2016 and 2017, Sarasota Memorial’s gastroenterology/GI surgery specialty was nationally ranked as high-performing, and nine procedures or conditions were also rated high-performing.

Taylor said he thinks CMS did a good job picking metrics that show a variety of aspects of care. But he was surprised to learn that only two hospitals in Florida received the highest grade. “There are others we consider a lot like us that didn’t do as well on this,” he said.

DONABEDIAN, ANYONE?

Back at the hospital that helps Harvard grow physicians, Mort conjures a name from a half-century ago, one that predates the U.S. News honor roll, Leapfrog, Healthgrades or the CMS stars: Avedis Donabedian, father of quality assurance.

While a professor at the University of Michigan’s School of Public Health in 1966, he set out what became known as the Donabedian model, which evaluates the quality of health care by considering three elements: structure, process and outcome.

“In the last 10 years, there has been a proliferation of report cards that looked primarily at ‘process,’ ” Mort said, “and the ‘outcomes’ that have been included typically have been adverse outcomes.”

Structure is the word that’s missing, in her view. If CMS wants to help patients choose hospitals, she said, it would be useful to convey more about the context of the care provided. What about the intensive care units? Even for a simple operation like a total joint replacement, she said, a patient should look for a strong ICU environment.

The 999-bed Massachusetts General estimated that it admits 48,000 patients a year, performs 42,000 surgeries, handles 1.5 million outpatient visits and records 100,000 emergency room trips. U.S. News lists it as nationally ranked in 16 adult specialties, and has placed it among the top three hospitals in the nation for 18 of the past 25 years (fourth in 2017).

It isn’t shouting from the rooftop about four CMS stars.

Less than 5 miles away, there’s the 118-bed New England Baptist Hospital, an orthopedic hospital. U.S. News lists it as nationally ranked in one specialty: orthopedics. Meanwhile, it boasts on its homepage, “Only Hospital in Massachusetts to receive CMS Five Star Rating.”

Mort said it doesn’t worry her. Little separates a five-star rating from a four-star one. She focuses instead on the government-forced comparison of an orthopedic hospital with Massachusetts General.

“It sort of speaks to the limitations of the survey,” she said. “You shouldn’t be rating a specialty hospital next to a general hospital or a community hospital next to an academic medical center.”

CMS reminds consumers in its reports that teaching hospitals often provide services that may not be available at other hospitals. The agency says the measures used in the star ratings are measures of routine care.

Does the public stop to consider the nuances while star gazing?

Orlowski, of the medical colleges’ organization, said it’s too early to know whether people are backing away from hospitals based on CMS ratings. But she knows they’re confused. “We’re very aware,” she said, “of communities where the five-star hospitals have touted their five stars. It has put the larger hospital, which may have three stars or less, in a position of trying to explain to the public why the methodology is not fair, without sounding like they’re making excuses.”

Hometown papers picked up the stories when the first ratings were made public. Some tried to sort out the controversy; others just reported the numbers. Then along came U.S. News, with contradictory conclusions.

There may be little relief ahead.

“There will be star ratings coming out on a quarterly basis,” Orlowski said. “The concern is how to deal with a continual barrage of this information.”

Patty Ryan is a journalist for the Tampa Bay Times.
